Ford Nuclear Reactor VR

A high-fidelity VR simulation of the University of Michigan's decommissioned Ford Nuclear Reactor.

Project Summary

Work Type: Educational VR for UM School of Engineering Students

Duration: 9 months (2020 to 2021)

Skill Categories: Virtual Reality Development, XR Experience Design, User Experience Design, Interaction Design, Technical Art and Shader Development, Simulation Integration, Game Engine Development (Unreal Engine 4), State-Based Architecture, Instructional Design, Immersive Learning, Real-Time Rendering Optimization

The Purpose

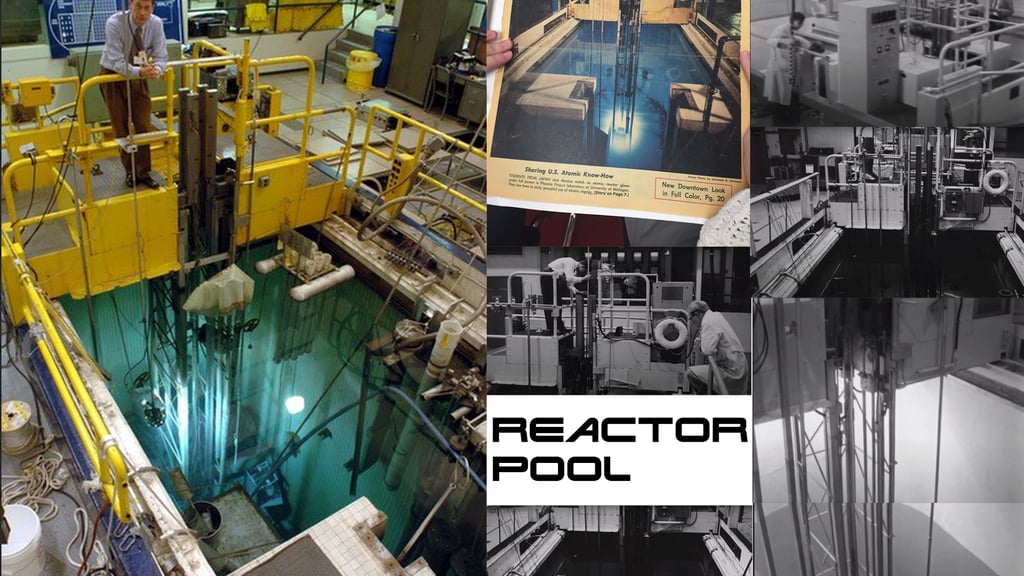

The Ford Nuclear Reactor (FNR) was a 2-megawatt pool-type research reactor that operated at the University of Michigan from 1957 until its permanent shutdown in 2003, after which the facility was decommissioned. For decades, it was the backbone of NERS 445, a hands-on nuclear reactor laboratory course in the Department of Nuclear Engineering and Radiological Sciences (NERS). When the reactor was decommissioned, so was the course.

This project was born from a faculty-led initiative to bring that experience back. The goal was to build a photorealistic, physics-accurate VR simulation of the FNR that would allow nuclear engineering students to explore the reactor floor, interact with authentic control systems, and perform the course's nuclear experiments in simulation, without radiation exposure, operational risk, or access to a physical facility.

The simulation was designed to serve senior undergraduates and graduate students in NERS, with future potential to expand to introductory students and K-12 audiences as an immersive science demonstration.

As Lead UX Designer and Developer, I owned the full experience from initial concept through deployment and handoff, including user experience design, interaction architecture, onboarding systems, experiment framework engineering, shader development, performance optimization, and stakeholder collaboration.

The Process

Research and Collaboration with Domain Experts

The project began with deep collaboration with NERS faculty and their PhD students. Because accuracy was non-negotiable for an educational science application, the early phases were research-intensive. We studied original blueprints and archival photographs of the FNR to reconstruct the physical environment at true scale. Faculty provided the reactor simulation models as Functional Mock-up Units (FMUs), built in MATLAB and Simulink to reproduce authentic reactor behavior.

This close partnership between XR developers and domain scientists shaped every design and development decision that followed.

Environment Reconstruction and Technical Art

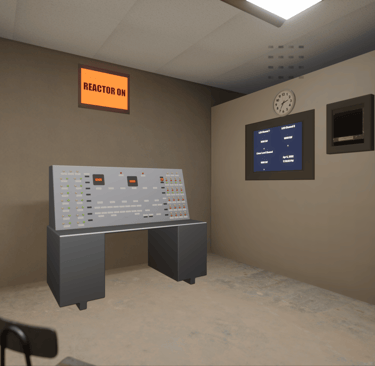

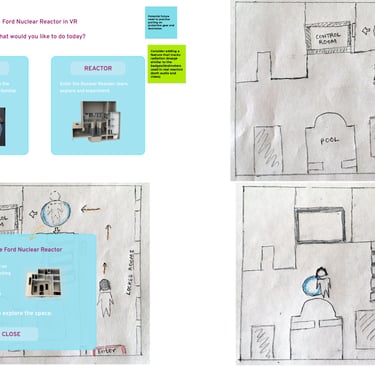

The entire reactor floor, control room, and reactor pool were modeled from historical documentation and photographs to match the original facility. PhD students contributed 3D assets, which I integrated into Unreal Engine 4.27 and prepared for real-time VR rendering.

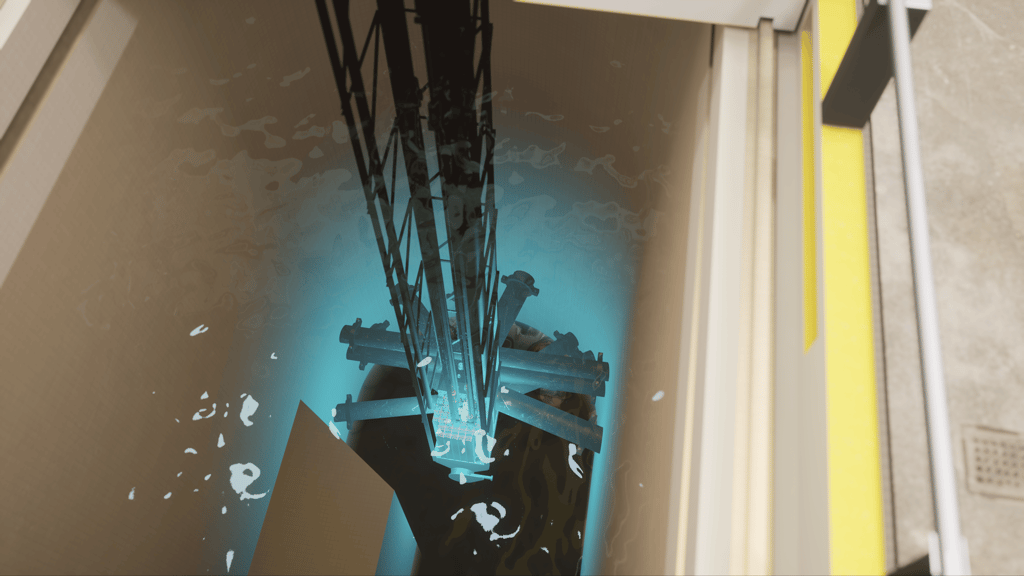

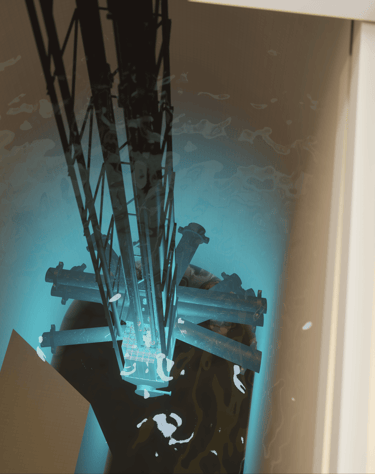

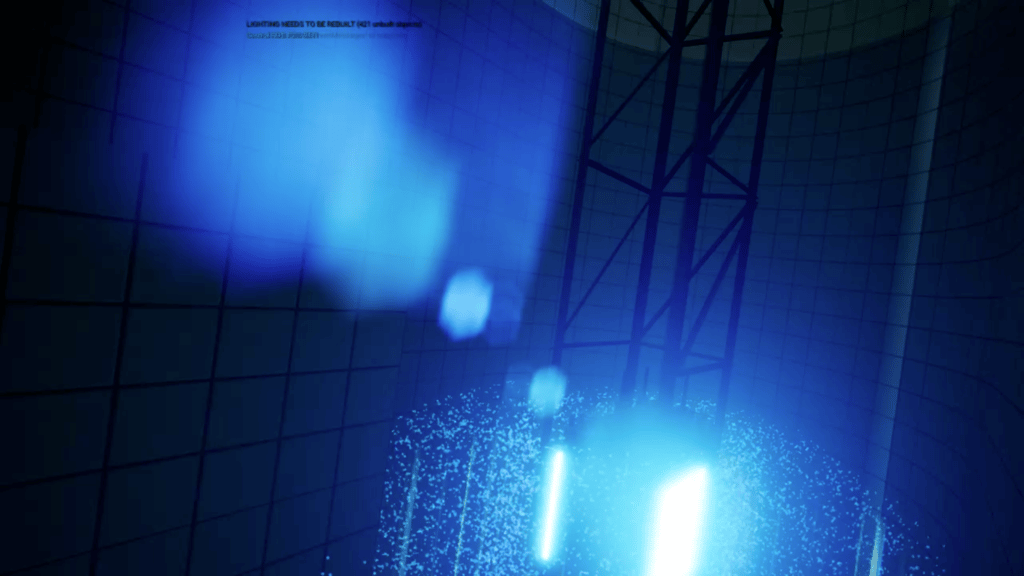

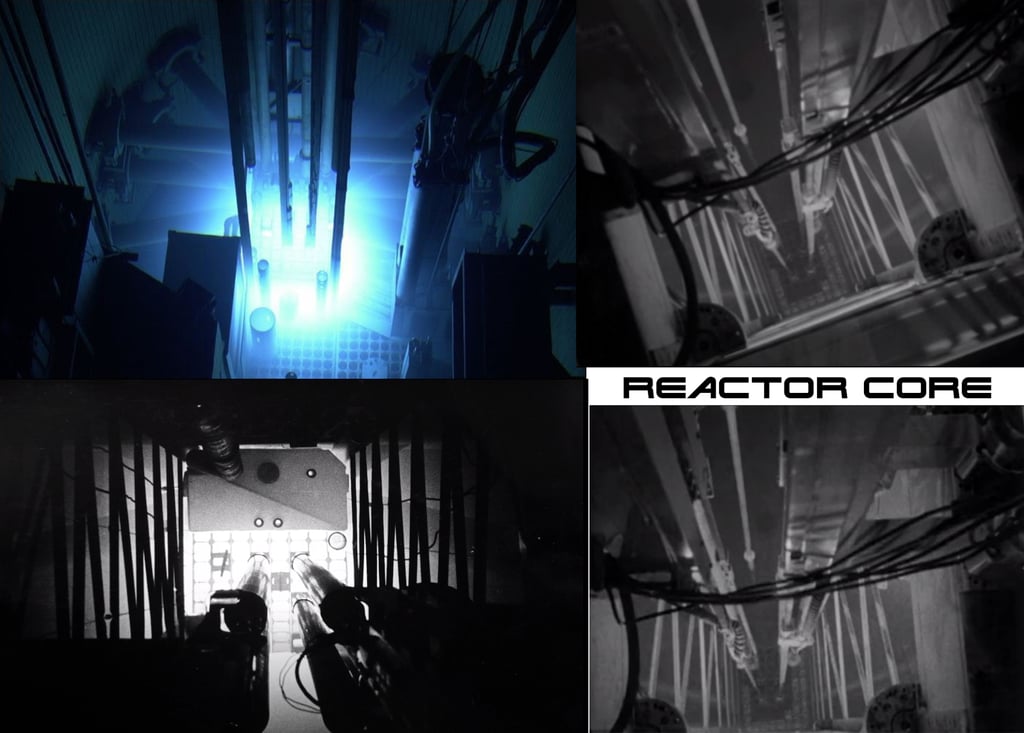

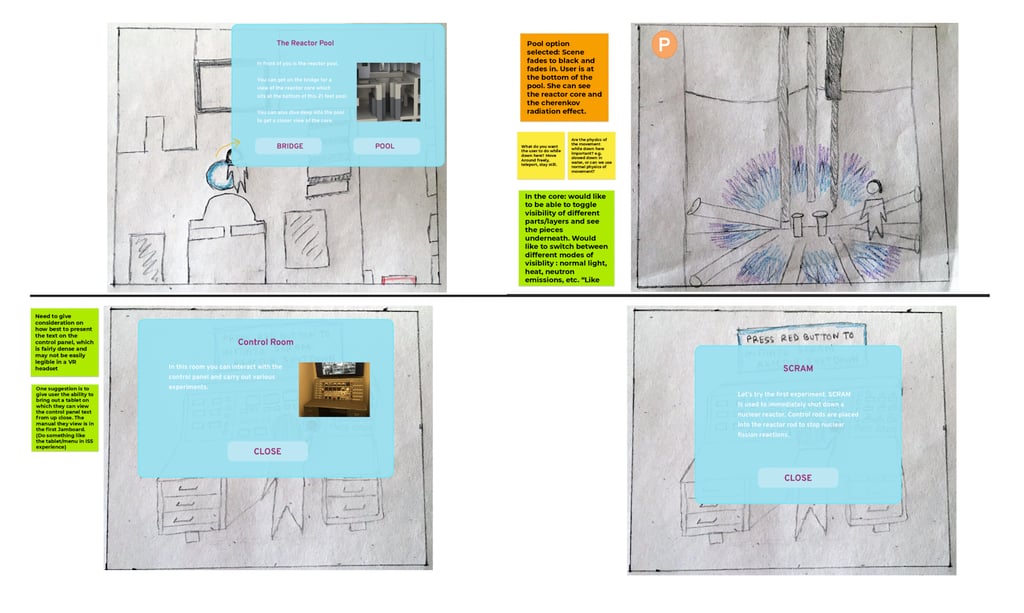

One of the most technically demanding parts of the project was the underwater environment of the reactor pool. Simulating the iconic Cherenkov radiation glow, underwater caustic light patterns, and volumetric water visuals while holding performance above 60 frames per second required building custom materials from scratch. It was a demanding technical art problem on tethered Windows Mixed Reality hardware (HP Reverb G2 headsets), and the pool became one of the most visually celebrated elements of the experience.

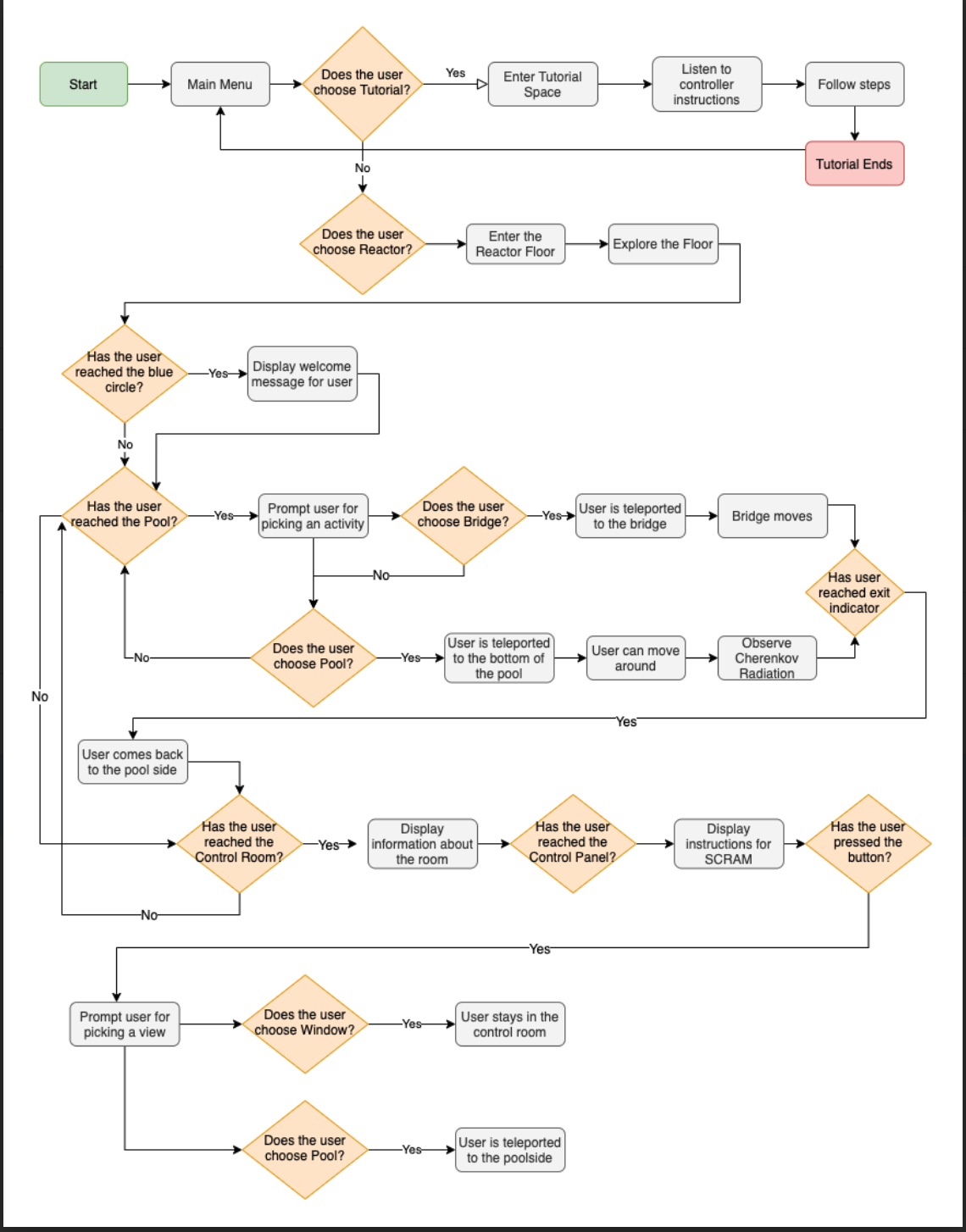

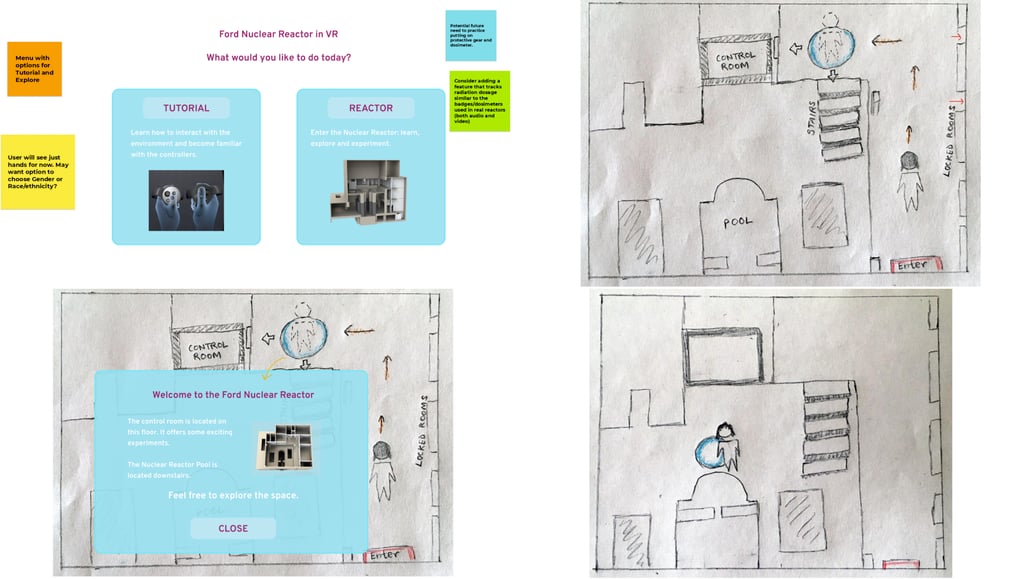

Interaction Design and Onboarding System

Because users ranged from students with no VR experience to more technically confident users, I designed a layered onboarding system that respected both groups. On first launch, users received a full interactive tutorial covering locomotion (teleportation), interaction with physical 3D objects, UI button presses, and control panel navigation. Students who wanted to skip directly to the experiments could do so, receiving only essential contextual guidance before proceeding.

This onboarding design went through multiple iterations with faculty review and student testing. The final system balanced thorough guidance with user agency, a key design principle for educational XR applications.

Experiment Framework Architecture

Four complete nuclear experiments were implemented inside a single continuous environment:

Iron Wire Activation - Demonstrates axial neutron flux profiles and reactor physics fundamentals.

Shutdown Power Level and Control Rod Calibration - Teaches control rod mechanics and subcritical measurement procedures.

Void and Temperature Reactivity Coefficient Measurements - Covers the reactivity feedback principles required by U.S. federal reactor safety regulations.

Xenon Transient - Simulates the behavior of Xenon-135, the most significant fission product affecting reactor dynamics.
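For readers unfamiliar with the physics behind the Xenon Transient experiment, the following is a minimal sketch of the standard textbook iodine-xenon balance after a reactor shutdown. It is not the faculty's FMU model, and it is written in Python purely for illustration. The constants are commonly quoted values for thermal fission of U-235, the flux values used with it are illustrative assumptions, and the function names are hypothetical. The sketch starts from equilibrium at a given flux, sets the flux to zero, and uses the closed-form solution for Xe-135 fed by decaying I-135.

```python
import math

# Textbook values for thermal fission of U-235; NOT taken from the FNR model.
HALF_LIFE_I_H = 6.57   # I-135 half-life, hours
HALF_LIFE_X_H = 9.14   # Xe-135 half-life, hours
LAMBDA_I = math.log(2) / (HALF_LIFE_I_H * 3600.0)  # I-135 decay constant, 1/s
LAMBDA_X = math.log(2) / (HALF_LIFE_X_H * 3600.0)  # Xe-135 decay constant, 1/s
GAMMA_I = 0.0639       # I-135 yield per fission (including its precursors)
GAMMA_X = 0.00237      # direct Xe-135 yield per fission
SIGMA_X = 2.65e-18     # Xe-135 thermal absorption cross section, cm^2


def xenon_after_shutdown(flux, hours):
    """Xe-135 level `hours` after shutdown, relative to its pre-shutdown equilibrium.

    `flux` is the steady thermal flux before shutdown, in n/cm^2/s.
    """
    # Equilibrium inventories per unit fission rate (that factor cancels in the ratio).
    i0 = GAMMA_I / LAMBDA_I
    x0 = (GAMMA_I + GAMMA_X) / (LAMBDA_X + SIGMA_X * flux)
    t = hours * 3600.0
    decay_x = math.exp(-LAMBDA_X * t)
    decay_i = math.exp(-LAMBDA_I * t)
    # With zero flux: dI/dt = -LAMBDA_I * I and dX/dt = LAMBDA_I * I - LAMBDA_X * X.
    x = x0 * decay_x + LAMBDA_I * i0 / (LAMBDA_I - LAMBDA_X) * (decay_x - decay_i)
    return x / x0


def xenon_peak(flux, max_hours=48.0, step_hours=0.1):
    """Scan the post-shutdown curve and return (peak time in hours, peak ratio)."""
    steps = round(max_hours / step_hours)
    times = [k * step_hours for k in range(steps + 1)]
    peak_time = max(times, key=lambda h: xenon_after_shutdown(flux, h))
    return peak_time, xenon_after_shutdown(flux, peak_time)
```

Under these assumptions, `xenon_peak(1e13)` returns a peak some hours after shutdown with a ratio above 1, because iodine keeps decaying into xenon after neutron absorption stops removing it. At a much lower flux such as `1e11`, xenon simply decays from its equilibrium value and the peak sits at time zero.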

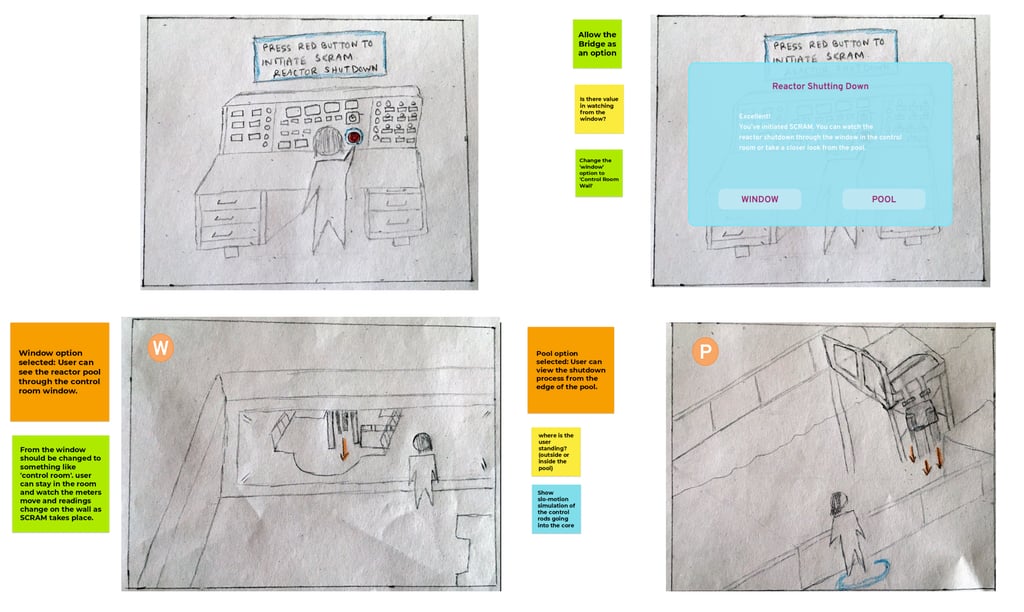

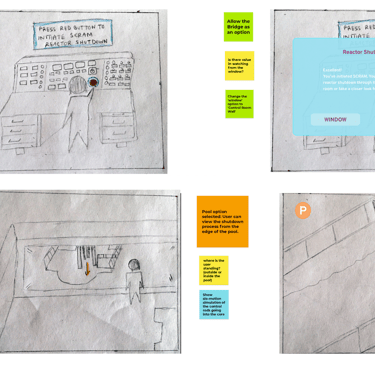

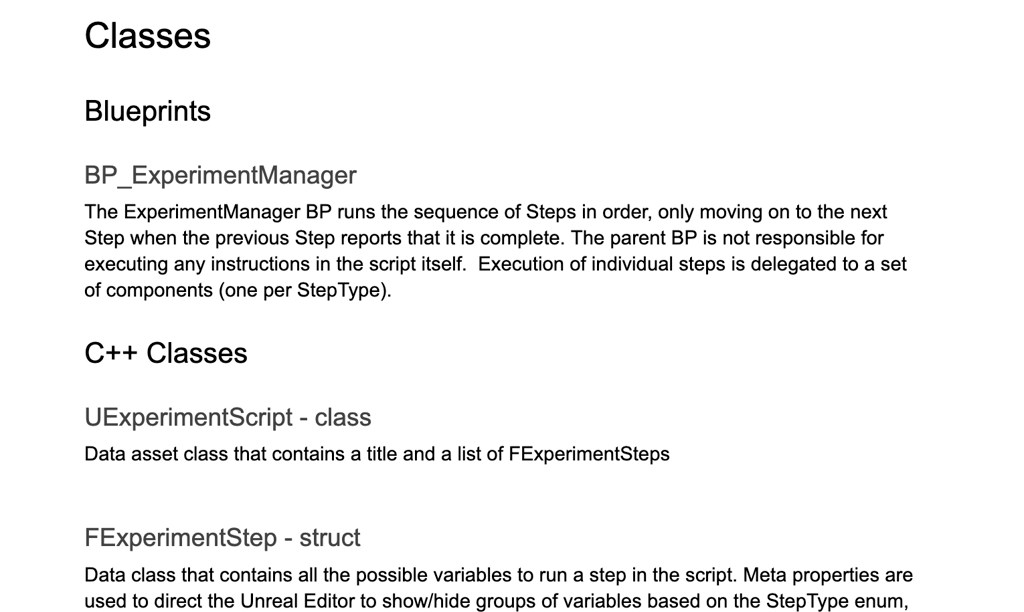

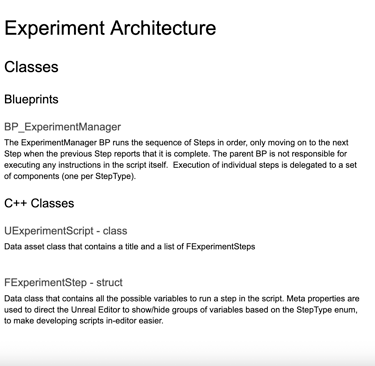

To support all four experiments within a shared environment, I co-developed a reusable experiment framework in Unreal Engine Blueprints, with targeted use of C++ for custom data structs and data assets. The framework supported modular step types including multiple-choice questions, physical object interaction tasks, UI button sequences, wayfinding prompts to different reactor locations, live reactivity graph readouts, and data recording triggers.

A state-based architecture tracked which experiment the user was in at all times and served the correct instructions, assets, and feedback for each step. This system made each experiment fully configurable through data rather than requiring redundant code, significantly reducing iteration time and allowing faculty to update experiment content independently after handoff.
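The idea of configuring experiments through data can be sketched roughly as follows. This is a language-neutral illustration in Python, not the project's Blueprint or C++ code. The class names, the `expected` field, and the example steps for the Iron Wire experiment are all hypothetical. The step kinds mirror the modular step types listed above, and a small runner tracks the active experiment and advances only when the incoming event matches the current step.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Optional, Tuple


class StepKind(Enum):
    MULTIPLE_CHOICE = auto()
    OBJECT_INTERACTION = auto()
    BUTTON_SEQUENCE = auto()
    WAYFINDING = auto()
    GRAPH_READOUT = auto()
    RECORD_DATA = auto()


@dataclass(frozen=True)
class Step:
    kind: StepKind
    instruction: str   # text shown to the student at this step
    expected: str      # hypothetical id of the answer, object, or location that completes it


@dataclass(frozen=True)
class Experiment:
    name: str
    steps: Tuple[Step, ...]


class ExperimentRunner:
    """Tracks which experiment and step are active and serves the matching instruction."""

    def __init__(self, experiments):
        self._experiments = {e.name: e for e in experiments}
        self._active: Optional[Experiment] = None
        self._index = 0

    def start(self, name):
        self._active = self._experiments[name]
        self._index = 0

    @property
    def current_step(self) -> Optional[Step]:
        if self._active is None or self._index >= len(self._active.steps):
            return None
        return self._active.steps[self._index]

    @property
    def finished(self) -> bool:
        return self._active is not None and self.current_step is None

    def handle(self, kind, payload) -> bool:
        """Advance only if the event matches the current step; otherwise ignore it."""
        step = self.current_step
        if step is None or step.kind is not kind or step.expected != payload:
            return False
        self._index += 1
        return True


# Hypothetical content: the real experiment steps are not documented here.
IRON_WIRE = Experiment(
    name="Iron Wire Activation",
    steps=(
        Step(StepKind.WAYFINDING, "Walk to the reactor pool.", "reactor_pool"),
        Step(StepKind.OBJECT_INTERACTION, "Pick up the iron wire.", "iron_wire"),
        Step(StepKind.MULTIPLE_CHOICE, "Where is the axial flux highest?", "core_midplane"),
        Step(StepKind.RECORD_DATA, "Record the measured activity.", "activity_log"),
    ),
)
```

In a design like this, adding a fifth experiment means adding another `Experiment` value rather than writing new control logic, which is the property that lets content be edited independently of the code.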

User Testing and Iteration

The first two experiments went through the most substantial revision cycles. We conducted testing sessions with enrolled NERS students, gathering feedback on clarity of instructions, physical interaction affordances, and the coherence of the experiment flow. Each testing round surfaced concrete usability issues that were addressed in subsequent iterations. Student feedback directly shaped the final onboarding structure, the timing and placement of wayfinding cues, and the design of the multiple-choice question interface.

Deployment, Documentation, and Handoff

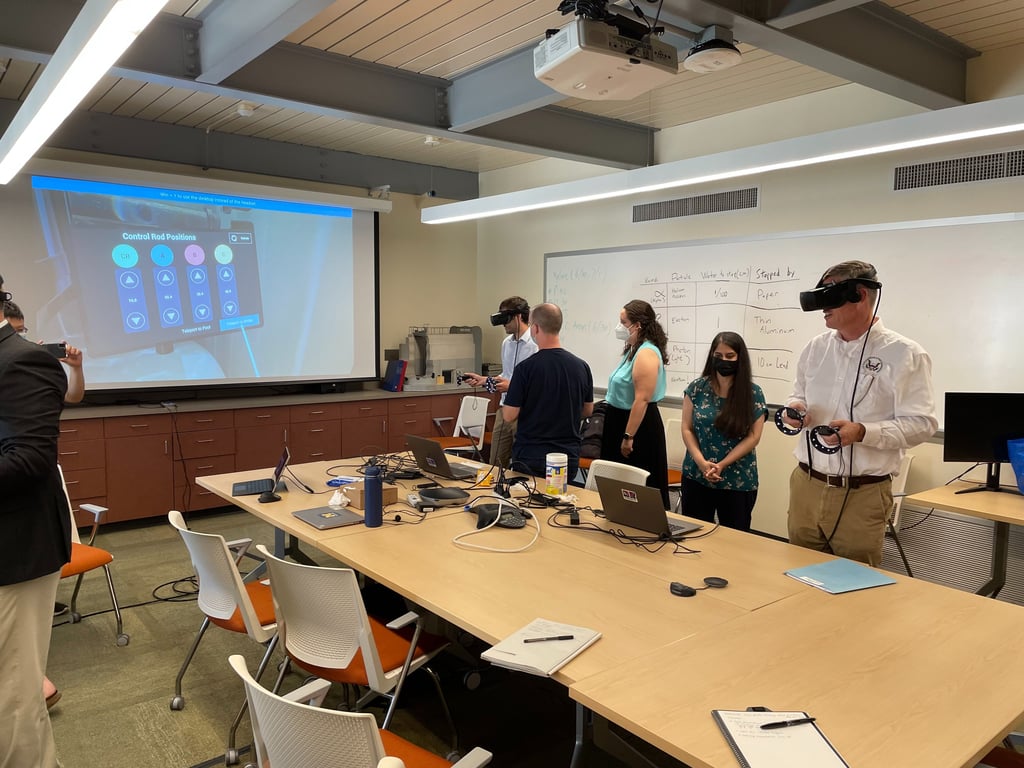

We managed a complete software development lifecycle from ideation and storyboarding through implementation, QA, deployment, and ongoing maintenance. At project close, we delivered full design and development documentation and hosted a technical workshop with the faculty team to transfer knowledge of the system's architecture, enabling them to continue development independently.

Technologies Used

Unreal Engine 4.27, Windows Mixed Reality (WMR) platform, HP Reverb G2 (tethered VR headset), Unreal Engine Blueprints, C++ (custom structs and data assets), FMU (Functional Mock-up Unit) integration, MATLAB / Simulink (simulation source, provided by NERS faculty), Custom Materials with Unreal Material Editor, 3D modeling pipeline integration

First Iteration

The first version of the experience established the core environment and the initial two experiments. Onboarding was minimal and assumed more prior VR knowledge than students actually had. The experiment flow was functional but lacked the reusable framework, requiring more bespoke implementation for each step. Student testing revealed significant friction in understanding when and how to initiate actions, prompting a full redesign of the instructional layer.

Below is a playthrough video of an earlier iteration of the Iron Wire experiment.

Second Iteration

Based on student and faculty feedback, the second iteration introduced the full layered onboarding system, the skippable tutorial, and the unified experiment framework. Instructions were repositioned to appear contextually at each step rather than front-loaded. Wayfinding cues were added to guide users between reactor locations during multi-location experiment steps. The Cherenkov pool shader was also refined in this phase to balance visual fidelity with frame rate stability.

Conclusion

The FNR VR simulation successfully revived a hands-on nuclear reactor laboratory experience that had been unavailable for nearly two decades. The project demonstrated that authentic scientific simulation can be embedded inside an immersive VR environment without sacrificing either educational rigor or experiential quality. The faculty have integrated the simulation into existing NERS courses over multiple semesters, presented it at academic conferences, and continue to maintain the project.

The experience was celebrated by University of Michigan College of Engineering leadership and showcased at high-profile campus events, validating its value as both an educational tool and a demonstration of XR's potential in science education.

Future Perspectives

There is significant opportunity to expand this experience in several directions. The experiment framework is architected to support additional lab modules with minimal development overhead, making it feasible to extend the curriculum beyond the original four experiments. Broadening the intended audience to include introductory undergraduate students and K-12 learners would require a redesigned onboarding track and a simplified interaction model, both achievable within the existing system. Migration to a standalone headset platform such as Meta Quest would dramatically lower the hardware barrier and expand deployment potential. There is also clear potential for multiplayer or instructor-led modes, where a teacher could guide students through the reactor environment in a shared session, opening the door to classroom-scale use in universities and science education programs beyond the University of Michigan.